Searching for Planets That Formed a Long Time Ago in a Galaxy Not So Far Away

Editor’s Note: Astrobites is a graduate-student-run organization that digests astrophysical literature for undergraduate students. As part of the partnership between the AAS and astrobites, we occasionally repost astrobites content here at AAS Nova. We hope you enjoy this post from astrobites; the original can be viewed at astrobites.org.

Title: Constraining the Planet Occurrence Rate around Halo Stars of Potentially Extragalactic Origin

Authors: Stephanie Yoshida, Samuel Grunblatt, and Adrian Price-Whelan

First Author’s Institution: Harvard University

Status: Published in ApJ

More than 5,000 planets have been discovered orbiting stars in the Milky Way, but astronomers have not yet confirmed detections of any planets in other galaxies. Most known exoplanets reside within a few thousand light-years of the solar system (less than the distance from us to the center of the Milky Way), so how can we find exoplanets at extragalactic distances? Today’s article describes a search for planets that formed in a dwarf galaxy that has since merged with the Milky Way.

(Not) Finding Extroplanets

A few extragalactic exoplanet, or “extroplanet,” candidates have been identified in the past, though none has been confirmed because follow-up observations at such distances are difficult. For example, in 2010, researchers announced the discovery of a planet orbiting HIP 13044, a star left over from a small galaxy that the Milky Way absorbed. Further study has since refuted this claim, noting errors in the analysis, and there is no longer evidence that such a planet exists. Today’s article continues the streak of not discovering extroplanets, but the authors use a statistical analysis to estimate how common planets may be around halo stars of extragalactic origin.

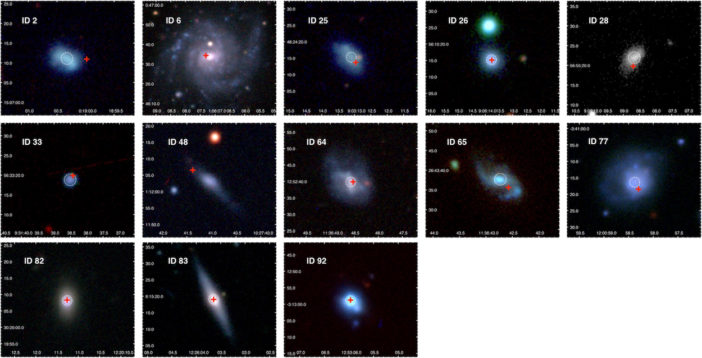

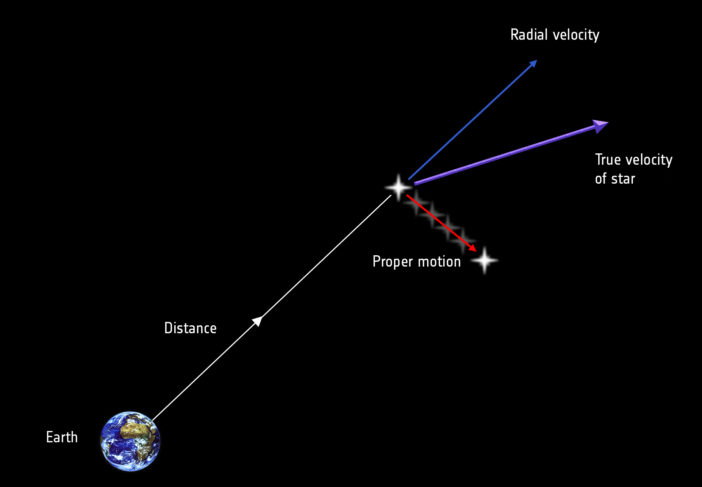

In the Milky Way’s outer halo, there is a unique population of stars with motions and elemental abundances that are different from stars that formed in the Milky Way. These stars are believed to have formed in a dwarf galaxy called Gaia–Enceladus that merged with the Milky Way 8–11 billion years ago. The authors of today’s article used measurements of stellar motions from the Gaia satellite’s second data release to identify stars moving in ways inconsistent with stars that formed in the Milky Way. These Gaia–Enceladus stars tend to have low or negative rotational velocity in the frame of the Milky Way, unlike typical Milky Way stars that rotate in the disk. The authors also set limits on stellar magnitude, color, and radius, combining Gaia and Transiting Exoplanet Survey Satellite (TESS) data to select low-luminosity red giant branch stars for this study. This allowed them to make direct comparisons to a previous study of similar stars with Kepler data.

TESS is searching for exoplanets that pass in front of their stars, periodically causing the stars to appear dimmer. The authors produced light curves for their sample of 1,080 stars from TESS images and searched them visually for any of these transit dips. No planet candidates were identified, so today’s article focused on using this non-detection to put a limit on how common planets could be around the stars in their sample.
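To get a feel for how small these dips are, the fractional dimming during a transit is roughly the ratio of the planet's disk area to the star's, (Rp/Rs)^2. The short Python sketch below evaluates that ratio. It ignores limb darkening and grazing transits, and the 4-solar-radius giant is a hypothetical value chosen for illustration, not a number taken from the paper.

```python
# Nominal radii in kilometres.
R_SUN_KM = 695_700.0
R_JUP_KM = 71_492.0


def transit_depth(planet_radius_rjup: float, star_radius_rsun: float) -> float:
    """Approximate fractional dimming: (planet radius / star radius) squared.

    Ignores limb darkening and grazing geometry.
    """
    ratio = (planet_radius_rjup * R_JUP_KM) / (star_radius_rsun * R_SUN_KM)
    return ratio ** 2


# A Jupiter-size planet crossing a Sun-size star dims it by about 1%.
depth_sun = transit_depth(1.0, 1.0)

# Hypothetical red giant with 4 solar radii (an assumed value):
# the same planet produces a dip 16 times shallower.
depth_giant = transit_depth(1.0, 4.0)

print(f"Sun-size star: {depth_sun:.4%}")
print(f"4 R_sun giant: {depth_giant:.4%}")
```

Because the depth scales with the inverse square of the stellar radius, transits across evolved stars are harder to spot than transits across Sun-like stars of the same brightness.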

Upper Limits

While a non-detection may sound disappointing at first, it can still teach us something. By calculating a study’s “completeness,” or what fraction of such objects it could detect, researchers can use a non-detection to place a constraint, or upper limit, on how common the objects may be.
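One common way to turn a non-detection into an upper limit can be sketched in a few lines of Python. Assume each of N stars hosts a planet with probability f, and that a hosted planet would be detected with probability p (here assumed to fold together the chance that the orbit is aligned to transit and the search's recovery rate). The chance of seeing nothing at all is then (1 - f p)^N, and any f that makes this chance smaller than 1 minus the chosen confidence level is ruled out. This is a simplified binomial argument, not necessarily the calculation used in the paper, and the function name and example inputs are hypothetical.

```python
def occurrence_upper_limit(n_stars: int,
                           detection_prob: float,
                           confidence: float = 0.95) -> float:
    """Upper limit on the occurrence rate f after zero detections.

    Solves (1 - f * detection_prob) ** n_stars = 1 - confidence for f.
    `detection_prob` is the assumed chance of detecting a planet that exists.
    """
    if n_stars <= 0:
        raise ValueError("n_stars must be positive")
    if not 0.0 < detection_prob <= 1.0:
        raise ValueError("detection_prob must be in (0, 1]")
    if not 0.0 < confidence < 1.0:
        raise ValueError("confidence must be in (0, 1)")
    limit = (1.0 - (1.0 - confidence) ** (1.0 / n_stars)) / detection_prob
    # An occurrence rate cannot exceed 100%.
    return min(limit, 1.0)


# Hypothetical inputs for illustration only (not the paper's values).
example = occurrence_upper_limit(n_stars=1000, detection_prob=0.5)
print(f"95% upper limit: {example:.3%}")
```

The limit tightens as the sample grows or as the search becomes more complete, which is why surveying more stars is the main route to stronger constraints.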

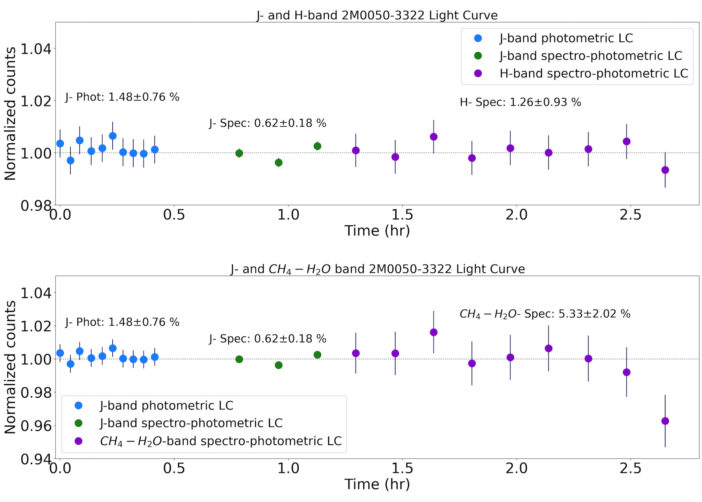

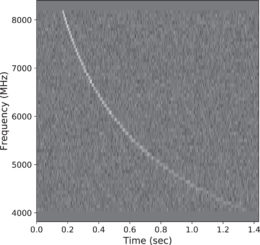

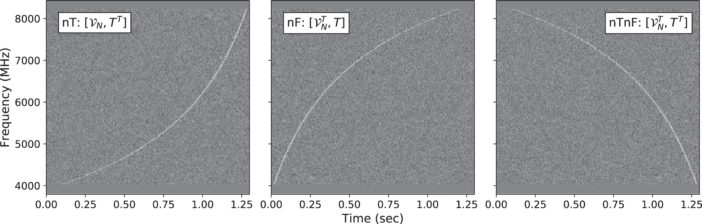

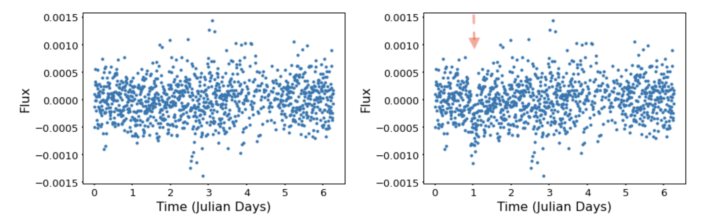

The authors used an injection-recovery method to calculate the completeness of their search. They inserted simulated transit signals into their light curves (example in Figure 1), then used a Box Least Squares search to try to identify the signals within some precision in orbital period and transit depth. They found that roughly 30% of their injected signals were recovered, that the recovery rate was highest for planets with short periods and large radii, and that planets smaller than half the size of Jupiter were essentially undetectable.

Figure 1: Left: TIC455692967’s observed light curve. Right: TIC455692967’s light curve with a simulated transit signal (marked with the red arrow). [Yoshida et al. 2022]
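The injection-recovery idea can be illustrated with a toy Python example. The sketch below injects box-shaped dips into synthetic light curves made of pure Gaussian noise, then runs a crude phase-folded box search written in NumPy as a simplified stand-in for a full Box Least Squares implementation. Every number in it (cadence, noise level, transit duration, period grid, detection threshold, tolerance) is an assumption made for illustration, and the function names are hypothetical; this is not the authors' pipeline.

```python
import numpy as np


def inject_box_transit(time, flux, period, t0, duration, depth):
    """Return a copy of `flux` with box-shaped dips of fractional `depth`."""
    phase = ((time - t0 + 0.5 * period) % period) - 0.5 * period
    out = flux.copy()
    out[np.abs(phase) < 0.5 * duration] -= depth
    return out


def box_search(time, flux, trial_periods, duration, n_bins=200):
    """Crude box search: fold at each trial period and slide a box in phase.

    Returns (best_period, best_snr). A simplified stand-in for BLS.
    """
    resid = flux - np.mean(flux)
    sigma = np.std(resid)
    best_period, best_snr = np.nan, -np.inf
    for period in trial_periods:
        bins = ((time % period) / period * n_bins).astype(int) % n_bins
        sums = np.bincount(bins, weights=resid, minlength=n_bins)
        counts = np.bincount(bins, minlength=n_bins)
        width = max(1, int(round(duration / period * n_bins)))
        # Sliding windows over phase (with wrap-around) via cumulative sums.
        csum = np.cumsum(np.concatenate([sums, sums[:width]]))
        ccnt = np.cumsum(np.concatenate([counts, counts[:width]]))
        win_sum = csum[width:] - csum[:-width]
        win_cnt = ccnt[width:] - ccnt[:-width]
        valid = win_cnt >= 3
        if not valid.any():
            continue
        # Mean in-box deficit in units of its expected noise.
        snr = -win_sum[valid] / (sigma * np.sqrt(win_cnt[valid]))
        if snr.max() > best_snr:
            best_period, best_snr = period, snr.max()
    return best_period, best_snr


def recovery_fraction(depth, n_trials=10, noise=1e-3, snr_min=7.0, seed=0):
    """Fraction of injected signals recovered at the right period."""
    rng = np.random.default_rng(seed)
    time = np.arange(0.0, 27.0, 1.0 / 48.0)   # assumed: 27 days, 30-min cadence
    trial_periods = np.arange(1.0, 8.0, 0.005)
    duration = 0.2                             # assumed transit duration in days
    recovered = 0
    for _ in range(n_trials):
        period = rng.uniform(1.5, 5.0)
        t0 = rng.uniform(0.0, period)
        flux = 1.0 + rng.normal(0.0, noise, time.size)
        flux = inject_box_transit(time, flux, period, t0, duration, depth)
        found, snr = box_search(time, flux, trial_periods, duration)
        if snr > snr_min and abs(found - period) / period < 0.01:
            recovered += 1
    return recovered / n_trials


if __name__ == "__main__":
    for depth in (5e-3, 1e-4):
        print(f"depth {depth:.0e}: recovered {recovery_fraction(depth):.0%}")
```

Repeating this over a grid of injected periods and depths would give a completeness map: the recovered fraction in each cell estimates how likely the search is to find a planet with those properties.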

Takeaways

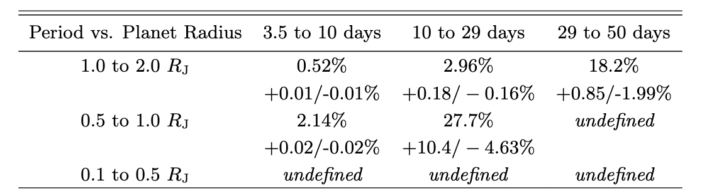

The final upper-limit calculation found that fewer than 0.52% of low-luminosity red giant halo stars host hot Jupiters (planets similar in size to Jupiter with orbital periods of 10 days or less). This finding agrees with a previous estimate that put the occurrence rate at roughly 0.5%. The occurrence rate of hot Jupiters correlates with stellar metallicity, generally measured as the ratio between iron and hydrogen abundances in the star. Halo stars typically have very low metallicities, suggesting that hot Jupiters should be about ten times rarer around halo stars than around other stars in the galaxy.

An upper limit is only the maximum occurrence rate consistent with the data, so these planets may be even less common than the percentages given in Figure 2. How will we actually find these rare planets of extragalactic origin? By studying more stars! The recent third data release from Gaia and ongoing TESS observations are moving astronomers in the right direction.

Original astrobite edited by Sahil Hegde.

About the author, Macy Huston:

I am a fourth-year graduate student at Penn State University studying Astronomy & Astrophysics. My current work focuses on technosignatures, also referred to as the Search for Extraterrestrial Intelligence (SETI). I am generally interested in exoplanet and exoplanet-adjacent research. In the past, I have performed research on planetary microlensing and low-mass star and brown dwarf formation.